Google AI and Princeton discover this about Deep Learning

Their discovery changes what we know about Deep Learning behavior

Much of an ML model’s learning results depend on the model’s learning rate. The learning rate is a tuning parameter in an optimization algorithm that determines the step size at each iteration while moving toward a minimum of a loss function. Since it influences the extent to which newly acquired information overrides old information, it metaphorically represents the speed at which a machine learning model “learns”.

The importance of Learning Rate can’t be underestimated. That is why there is a lot of research towards both discovering new learning rate schedules (how LR should change over time) and comparing existing ones. Researchers at Google AI, Tel Aviv University, and Princeton collaborated together to write Disentangling Adaptive Gradient Methods from Learning Rates. The paper looks at “how adaptive gradient methods interact with the learning rate schedule.” In this article, I will share some interesting takeaways from the paper that might help you in your ML journeys. Share your thoughts on the paper and the ideas you found most interesting through the comments or DMs. It’s a good way for us all to learn/get new ideas from each other. If you’re hoping to work in Machine Learning, check out this video, that explains what Machine Learning projects will be most helpful in accelearting your growth, and getting a job.

Understanding the context

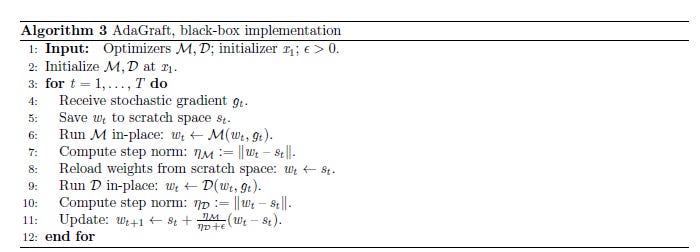

To understand the paper, it is important to understand the basics of the theory they are operating with. Generally it is asssumed that learning rate schedulers like Adam are great because they are able to compute two aspects, the Magnitude and the Direction. Think of the magnitude being the absolute value of the step size, and direction equating to the direction the step is taken in. Remember, since a lot of input data in Machine Learning is high dimensional, choosing the right direction to traverse is not a trivial task. The image below is a good overview of second-moment optimizers. Don’t worry if you can’t understand everything, just notice how we are changing the different values based on the gradient we calculate.

The authors in the paper attempt to isolate how important the choosing the correct step size is in the learning behavior. They do so by proposing a grafting experiment. Instead of taking the magnitude and direction from the same optimizer, we take them from two different optimizers.

Then by comparing the behavior of the grafted optimizers across a variety of tasks, we can check how important the step size is to the overall performance of the model learning. If we see relatively consitent performance across tasks where the magnitude used the same optimizer protocol (despite directions being different), we can conclude that Step Size is most important. And vice versa.

Performance on Computer Vision

A darling subfield of Google AI, no Google paper is complete without a Computer Vision task tested. The setup details are as follows, “We ran all pairs of grafted optimizers on a 50-layer residual network [HZRS16] with 26M parameters, trained on ImageNet classi cation [DDS+09]. We used a batch size of 4096, enabled by the large-scale training infrastructure, and a learning rate schedule consisting of a linear warmup and stepwise exponential decay.”

The results are very interesting. We see that the values each row are relatively consistent (both top-1 and top-5 accuracy) across the rows (step size from the same optmizer). There is quite a bit of variance across the columns (direction from the same optimizer). This leads to a very interesting conclusion. The step size seems to be the dominating factor for model learning behavior. This is articulated by the authors through the statement, “Figure 1 shows at a glance our main empirical observation: that the shapes of the training curves are clustered by the choice of M, the optimizer which supplies the step magnitude.”

This is intersting enough on it’s own. But when you think about it, this leads to an interesting application. Imagine a dataset where we have worse 1 optimizer being worse worse than another. However, Optimizer 1 is cheaper to run. So we use Optimizer 2 to calculate the step size, and graft that onto 1. This would improve performance on 1, while being cheaper than Optimizer 2. Look at the findings for AdaGrad for a proof of concept of this idea.

For those interested in the implementation of the graft, this is in the appendix. Check it out for a lot of the little configuration/technical details. It will be helpful if you want to implement something similar.

NLP Performance

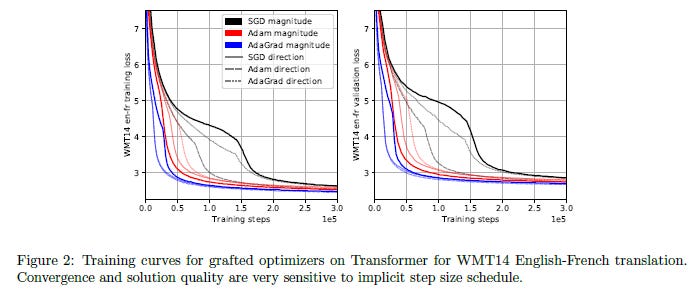

Next we move on to a natural language processing task. According to the authors, “For a realistic large-scale NLP setting, we trained all grafted optimizers on a 6-layer Transformer network [VSP+17] with 375M parameters, on the WMT14 English-French translation task, which has 36.3M sentence pairs. Again, we use a large batch size (384 sequences), enabling very robust training setups. More details can be found in Appendix C.3.” The performance is seen below

Looking at the table below (conducted over a seperate task), we see that once again, the values across the rows are a lot more stable than the values across columns (though this task has more stable performance overall). This once again implies the idea that magnitude is a stronger factor than direction.

In fact, we see the best result comes from a grafted performer. The authors even highlight this in the paper with their comments, “Interestingly, beyond demonstrating the same clustering of performance metrics by the choice of M, these experiments show that it is possible for a grafted optimizer to outperform both base methods M and D; see Figure 2 for loss curves, and Table 3.3 for the downstream BLEU metric, with which our results are consistent. Again, we are not making claims of categorical superiority under careful tuning, and only thepower of bootstrapping; we stress that we did not even tune the global learning rate scalar.”

The combination of the results across the both Computer Vision and NLP is pretty convincing to me. It was quite surprising how comprehensively we could show the dominance of the step size. The potential for boostrapping is also fascinating, and it would be interesting if we could build evaluation protocols to be able to identify best combinations for a particular problem.

If you liked this article, check out my other content. I post regularly on Medium, YouTube, Twitter, and Substack (all linked below). I focus on Artificial Intelligence, Machine Learning, Technology, and Software Development. If you’re preparing for coding interviews check out: Coding Interviews Made Simple.

For one-time support of my work following are my Venmo and Paypal. Any amount is appreciated and helps a lot:

Venmo: https://account.venmo.com/u/FNU-Devansh

Paypal: paypal.me/ISeeThings

Reach out to me

If that article got you interested in reaching out to me, then this section is for you. You can reach out to me on any of the platforms, or check out any of my other content. If you’d like to discuss tutoring, text me on LinkedIn, IG, or Twitter. If you’d like to support my work, using my free Robinhood referral link. We both get a free stock, and there is no risk to you. So not using it is just losing free money.

Check out my other articles on Medium. : https://rb.gy/zn1aiu

My YouTube: https://rb.gy/88iwdd

Reach out to me on LinkedIn. Let’s connect: https://rb.gy/m5ok2y

My Instagram: https://rb.gy/gmvuy9

My Twitter: https://twitter.com/Machine01776819

My Substack: https://codinginterviewsmadesimple.substack.com/

Get a free stock on Robinhood: https://join.robinhood.com/fnud75

Google AI and Princeton discover this about Deep Learning was originally published in Geek Culture on Medium, where people are continuing the conversation by highlighting and responding to this story.