Understanding Kafka [System Design Sundays]

You will see this technology a lot as you read engineering blogs. It is very powerful for cloud technology.

Happy Sunday all,

You will notice that the color scheme on the website is different. I received feedback from a reader that he found it hard to see the bolded text on the previous colors. So we are switching up our aesthetics for a bit. If you have any recommendations, my DMs are always open to suggestions. Just comment, reply to this email or use any of my links to reach out.

Recently, I was reading this excellent blog post by the DoorDash engineering team titled Building Faster Indexing with Apache Kafka and Elasticsearch. If you read a lot of engineering blogs, you will find that tons of people use Kafka in their projects. This seemed like the perfect time to cover the technology.

Highlights

This post will cover the following topics-

What is Kafka- Apache Kafka is an open-source distributed event streaming platform. Streaming data is data generated continuously by thousands of data sources, which typically send in their records simultaneously. For example, DoorDash has thousands of customers sending in their orders at any given time. Kafka helps you ingest, store, and process this data.

Functions of Kafka- Kafka is well known for 3 major abilities: 1) Publish and subscribe to streams of records; 2) Effectively store streams of records in the order in which records were generated; and 3) Process streams of records in real time.

5 APIs of Kafka- Kafka has 5 APIs- admin, producer, consumer, streams, and connect. We’ll describe them briefly.

For a quick introduction, this IBM cloud video, “What is Kafka?”, is your best friend. If you want a lot of the nerdy deets, then Kafka has some legendary documentation. AWS and IBM also have great writeups on Kafka which I will be referencing here.

Let’s get right into it.

What is Apache Kafka? Why Apache Kafka?

Kafka was created at LinkedIn as a high-throughput message broker and later donated to the Apache Software Foundation. It is an open-source tool, used by some of the biggest names in the world.

An event is any significant occurrence or change in state for system hardware or software (source: Red Hat). Kafka lets software engineers build real-time and event-driven applications. A classic example of such an app is Netflix. Netflix needs to stream data to you in real time (imagine having to wait 5 minutes for a movie to load), and it needs to be responsive to your clicks (events).

With Cloud Computing and the scale at which most big firms operate, we tend to have a lot of events generated concurrently. Think of how many people watch Netflix throughout the world at any given time. Your pipelines need to be able to handle all the events safely and efficiently.

Kafka is a wonderful tool. However, it is important to remember that it is just a tool. Tricky interviewers might give you a question where you are tempted to use Kafka even though it is not the correct approach. Let’s take a look at when you should use Kafka next.

When would you use Kafka?

Kafka is used primarily for creating two kinds of applications:

Real-time streaming data pipelines: These are applications designed to move millions of data or event records between enterprise systems, at scale and in real time. Kafka is built to move them reliably, so you spend less time worrying about corruption, duplication of data, and other problems that typically occur when moving such huge volumes of data.

Real-time streaming applications: Applications that are driven by record or event streams and that generate streams of their own. We are all familiar with these from IG updating the likes and comments, to YouTube funneling you down a new rabbit hole based on a few clicks.
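To make the second kind of application concrete, here is a minimal sketch in plain Python (no Kafka involved) of a program that is driven by a stream of events and generates a stream of its own. The function name `running_like_counts` and the event fields `post_id` and `user` are made up for illustration. In a real system the input and output would be Kafka topics rather than a list and a generator.

```python
from collections import Counter


def running_like_counts(events):
    """Consume like events one at a time and emit an updated count per event."""
    counts = Counter()
    for event in events:
        counts[event["post_id"]] += 1
        # Each input event immediately produces one output record.
        yield {"post_id": event["post_id"], "likes": counts[event["post_id"]]}


# Hypothetical input stream: three "like" events on two posts.
like_events = [
    {"post_id": "a", "user": "u1"},
    {"post_id": "b", "user": "u2"},
    {"post_id": "a", "user": "u3"},
]

updates = list(running_like_counts(like_events))
for update in updates:
    print(update)
```

The point of the generator is that output appears as each event arrives, instead of waiting for the whole batch to finish.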

What makes Kafka ideal for these applications

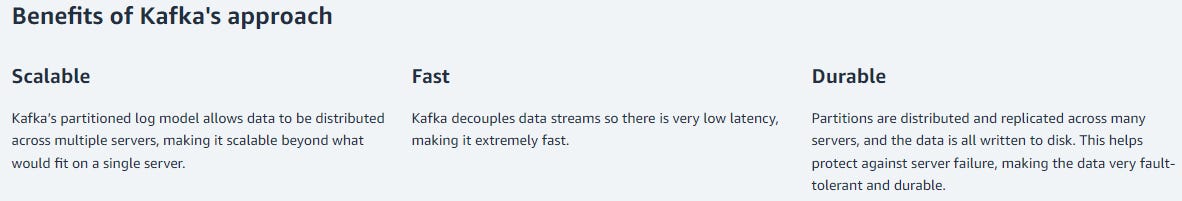

As discussed, Kafka has 3 major strengths that have made it so powerful. They are the abilities to-

Publish and subscribe to streams of records- This allows multiple services to interact with each other and gain information from each other while remaining decoupled

Store streams of records in the order in which records were generated- Kafka keeps this order within each partition of a topic. Imagine I decide to start doing in-person seminars. Since I am the most woke person on the internet, the tickets will sell out immediately. Loyal followers like you will probably be the first ones on the website to buy the tickets. We want to make sure you get them, and not some pleb who was 5 minutes late.

Process streams of records in real time- Going back to DoorDash, imagine how much worse the app would be if the food you ordered now only got sent to the food place 3 hours later.
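The three abilities above can be sketched with a toy in-memory log. This is not Kafka's client API. `TopicLog`, `publish`, `poll`, and the service names are hypothetical names chosen for this example, and a real Kafka topic is split into partitions and replicated across brokers. The sketch only shows the idea: records are stored in arrival order, and each subscriber tracks its own position, so services stay decoupled.

```python
from collections import defaultdict


class TopicLog:
    """Toy stand-in for a single-partition topic: an append-only list."""

    def __init__(self):
        self.records = []                 # stored in arrival order
        self.offsets = defaultdict(int)   # next offset to read, per subscriber

    def publish(self, record):
        """Append a record and return the offset it was stored at."""
        self.records.append(record)
        return len(self.records) - 1

    def poll(self, subscriber):
        """Return records this subscriber has not seen yet, oldest first."""
        start = self.offsets[subscriber]
        batch = self.records[start:]
        self.offsets[subscriber] = len(self.records)
        return batch


orders = TopicLog()
orders.publish({"order_id": 1, "item": "pad thai"})
orders.publish({"order_id": 2, "item": "burrito"})

# Two decoupled services read the same stream at their own pace.
kitchen_batch = orders.poll("kitchen-service")
orders.publish({"order_id": 3, "item": "ramen"})
billing_batch = orders.poll("billing-service")
kitchen_next = orders.poll("kitchen-service")

print([r["order_id"] for r in kitchen_batch])   # [1, 2]
print([r["order_id"] for r in billing_batch])   # [1, 2, 3]
print([r["order_id"] for r in kitchen_next])    # [3]
```

Notice that the billing service joined late and still saw every order in the original order, while the kitchen service only received what was new since its last poll.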

Take a second to think. Chances are most of the apps you’ve interacted with have used at least one of these properties. This is what makes Kafka so prevalent in System Design.

Now let’s talk about the nerdy stuff. Time for a quick intro to the mechanics of Kafka. Specifically, the 5 APIs of Kafka.

The 5 APIs of Kafka

In the beginning, there was peace among the 5 nations. Then the fire nation invaded.

…Looks like I got my scripts messed up. Let’s take this from the top. Kafka has 5 amazing APIs. The team at Kafka wrote an amazing introduction for their APIs here, so here it is, straight from the horse’s mouth:

Kafka has five core APIs for Java and Scala:

The Admin API to manage and inspect topics, brokers, and other Kafka objects.

The Producer API to publish (write) a stream of events to one or more Kafka topics.

The Consumer API to subscribe to (read) one or more topics and to process the stream of events produced to them.

The Kafka Streams API to implement stream processing applications and microservices. It provides higher-level functions to process event streams, including transformations, stateful operations like aggregations and joins, windowing, processing based on event-time, and more. Input is read from one or more topics in order to generate output to one or more topics, effectively transforming the input streams to output streams.

The Kafka Connect API to build and run reusable data import/export connectors that consume (read) or produce (write) streams of events from and to external systems and applications so they can integrate with Kafka. For example, a connector to a relational database like PostgreSQL might capture every change to a set of tables. However, in practice, you typically don't need to implement your own connectors because the Kafka community already provides hundreds of ready-to-use connectors.
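To see how the five roles fit together, here is a rough sketch that maps each one onto a method of a toy in-memory class. This is not the real Kafka API (the real APIs are Java and Scala libraries that talk to a running cluster). `ToyCluster` and all of its method names are invented for this example, and the "database rows" are just a hard-coded list.

```python
class ToyCluster:
    """In-memory stand-in for a Kafka cluster; topics map to lists of events."""

    def __init__(self):
        self.topics = {}

    # Admin role: manage and inspect topics.
    def create_topic(self, name):
        self.topics.setdefault(name, [])

    def list_topics(self):
        return sorted(self.topics)

    # Producer role: publish (write) an event to a topic.
    def produce(self, topic, event):
        self.topics[topic].append(event)

    # Consumer role: subscribe to (read) a topic from a given offset.
    def consume(self, topic, offset=0):
        return self.topics[topic][offset:]

    # Streams role: read one topic, transform, write to another topic.
    def stream(self, source, sink, transform):
        for event in self.consume(source):
            self.produce(sink, transform(event))

    # Connect role: import rows from an external system into a topic.
    def connect_source(self, topic, external_rows):
        for row in external_rows:
            self.produce(topic, row)


cluster = ToyCluster()
cluster.create_topic("orders")
cluster.create_topic("order-totals")

# Pretend this row was captured from a database table by a connector.
cluster.connect_source("orders", [{"id": 1, "price": 12.0, "qty": 2}])
cluster.produce("orders", {"id": 2, "price": 5.0, "qty": 3})

cluster.stream(
    "orders",
    "order-totals",
    lambda order: {"id": order["id"], "total": order["price"] * order["qty"]},
)

totals = cluster.consume("order-totals")
print(cluster.list_topics())  # ['order-totals', 'orders']
print(totals)
```

The takeaway is the division of labor: admin sets things up, producers and connectors write events in, streams jobs turn one topic into another, and consumers read the results.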

Clearly, Kafka has all the right tools to help the internet. And the results speak for themselves. Almost every major platform uses it in some way. And now you have all the tools to use it too.

If you have enjoyed this post so far, please make sure you like it (the little heart button in the email/post). I also have a special request for you.

***Special Request***

This newsletter has received a lot of love. If you haven’t already, I would really appreciate it if you could take 5 seconds to let Substack know that they should feature this publication on their pages. This will allow more people to see the newsletter.

There is a simple form in Substack that you can fill out for it. Here it is. Thank you.

https://docs.google.com/forms/d/e/1FAIpQLScs-yyToUvWUXIUuIfxz17dmZfzpNp5g7Gw7JUgzbFEhSxsvw/viewform

To get your Substack URL, follow these steps-

Open - https://substack.com/

If you haven’t already, log in with your email.

In the top right corner, you will see your icon. Click on it. You will see the drop-down. Click on your name/profile. That will show you the link.

You will be redirected to your URL. Please put that into the survey. I appreciate your help.

In the comments below, share what topics you want me to focus on next. I’d be interested in learning about them and will cover them. To learn more about the newsletter, check our detailed About Page + FAQs.

If you liked this post, make sure you fill out this survey. It’s anonymous and will take 2 minutes of your time. It will help me understand you better, allowing for better content.

https://forms.gle/XfTXSjnC8W2wR9qT9

I see you living the dream.

Go kill all and Stay Woke,

Devansh <3

To get the most out of System Design Sundays, make sure you’re checking in on the other days as well. Leverage all the techniques I have discovered through my successful tutoring to easily succeed in your interviews and save your time and energy by joining the premium subscribers.

Reach out to me on:

Instagram: https://www.instagram.com/iseethings404/

Message me on Twitter: https://twitter.com/Machine01776819

My LinkedIn: https://www.linkedin.com/in/devansh-devansh-516004168/

My content:

Read my articles: https://rb.gy/zn1aiu

My YouTube: https://rb.gy/88iwdd
