How Linearity Makes LLMs more Energy Efficient[Math Mondays]

How Modern Neural Networks are leveraging linear operations to speed up computations

Hey, it’s your favorite cult leader here 🐱👤

Mondays are dedicated to theoretical concepts. We’ll cover ideas in Computer Science💻💻, math, software engineering, and much more. Use these days to strengthen your grip on the fundamentals and 10x your skills 🚀🚀.

To get access to all the articles and support my crippling chocolate milk addiction, consider subscribing if you haven’t already!

p.s. you can learn more about the paid plan here.

If you study the recent trends in LLMs, one interesting pattern pops up: more and more energy-efficient LLMs are relying on Linear Operations.

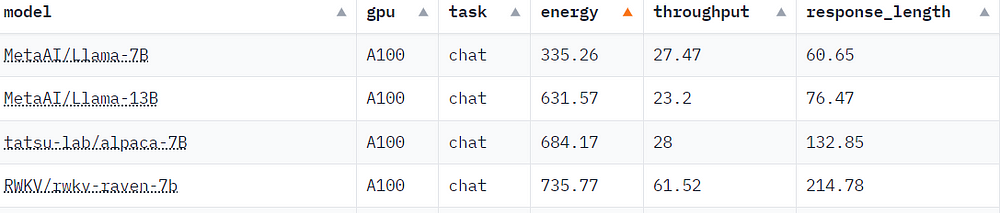

This is quite a reversal, given how the original benefit of Deep Learning based Neural Networks was their ability to model non-linear relationships. However, recently LLM providers are becoming increasingly concerned about the energy requirements for running LLMs at scale. This has seen an increased focus on energy-efficient LLMs. This has been the motivation behind the development of architecture like Mamba and RWKV. These architectures demonstrate the competitive performance with LLMs for a fraction of the cost.

In this piece, we will be covering the following ideas-

What is Linearity?

Why Deep Learning has benefited from non-linearity

How Linearity enables more efficient LLMs.

As someone who has been concerned about the carbon emissions from LLMs for a while, I’m pretty excited to see how this trend unfolds and how it leads to lower energy usage. Let’s get into it.

What is Linearity in Computer Science and AI

In a mathematical context, linearity describes a relationship where the output changes proportionally to changes in the input. Mathematically, a linear function satisfies two properties:

Additivity: The function applied to a sum is equal to the sum of the function applied individually to each term: f(x + y) = f(x) + f(y)

Homogeneity: The function applied to a scaled input equals the function applied to the input, then scaled: f(cx) = c * f(x)

Linearity offers simplicity and predictability, making it a cornerstone in classical mathematical modeling. Linear computations can be done very quickly, making them very scalable. However, their simplicity ends up being a huge drawback, as Linearity is incapable of handling complex relationships between data points. This is where Deep Learning and Neural Networks became a dream team.

Deep Learning and the Necessity of Non-Linearity

Contrary to popular opinion, Deep Learning and Neural Networks are not the same thing. There are linear neural networks. However, non-linearity was the piece that enabled the rise of neural networks to become the face of Machine Learning. Let’s talk about how.

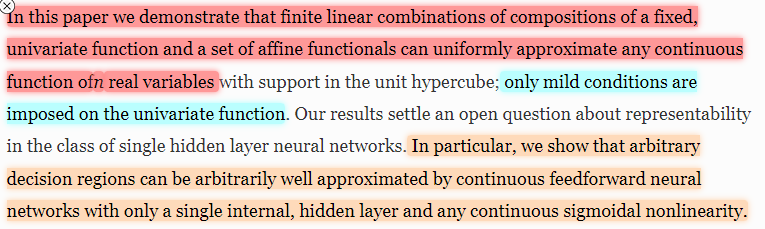

The world is inherently non-linear. Consider image recognition: the relationship between pixel intensities and whether the image contains a cat is highly complex and non-linear. This complexity can’t really be modelled by the linear systems. But all is not lost. Take a look at the paragraph below, taken from the Approximation by superpositions of a sigmoidal function

Turns out that we can approximate any continuous function by taking a combination of non-linear functions. I’m highlighting continuous b/c many people misunderstand this theorem to assume that you can approximate any function. This faulty assumption has wasted a lot of time, energy, and VC money. Very big deep networks work because they can try a lot of non-linear functions and combine them in various ways. This is what allows us to throw all kinds of data at Deep Learning networks and get a somewhat functional solution (PS: this is not a good idea, and there are almost always better ways to go about it. I’m simply saying you can do this, not that you should).

More specifically, deep learning heavily relies on non-linear activation functions (The activation function of a node in an NN determines the output of the node based on its individual inputs and weights). These non-linear functions allow neural networks to bend and twist their internal representations, capturing highly complex relationships. Some prominent activation functions are shown below (source).

Modern LLMs are looking to combine the benefits of non-linearity and linearity by mixing them strategically.

Making LLMs more Efficient through Linearity

Traditional LLMs are based on Transformers, which are a special kind of neural network that can be scaled to very large sizes. The secret behind the scale of transformers is their attention mechanism, which allows them to parallelize training (utilize multiple computing clusters at once) while also maintaining long-term relationships between sequences.

However, the attention mechanism is very costly. Attention looks at every token, for every decision- making the entire process very bloated. This results in operations with very high memory and time costs-

There are more efficient architectures (RNNs), but they cannot parallelize training, limiting their performance, since they have to process tokens one at a time (and thus can’t be trained in parallel). This gap is what modern LLMs like Mamba and RWKV seek to address. They build RNN-like models that have efficient inference, with a slight twist. Instead of adding non-linearity at every step, they only add non-linearity at the last steps. Since most of the computations are linear, they can be done in parallel. These are then fed to non-linear blocks, which can model the complexity.

By removing non-linearity at every step, we are reducing the expressivity of the system, trading it for efficiency gains. However, we have seen that at a large scale + good data- this isn’t a huge issue since different networks converge to similar performance. Given how expensive LLMs are to run, the few points lost in performance are made for by the very strong energy reduction. I’d happily take the reduced emissions if all it means are is slightly worse performance on benchmarks (which we can also improve by better engineering).

If you want to learn more about the math/engineering details of how we replace non-linearities to balance the cost-performance tradeoff, check out my breakdown of RWKV on AI Made Simple. You can find it below

Ready to simplify your tech journey? Subscribe to Technology Made Simple and get clear, actionable insights to boost your tech skills and career. Forget wasting time on endless tutorials — find everything you need in one place.

Special Offer: Save 20% on your first year! Here’s what you get:

Monthly Plan: 640 INR (8 USD) [Originally 800 INR]

Yearly Plan: 6400 INR (80 USD) [Originally 8000 INR]

Still hesitant? Try risk-free with our 6-month money-back guarantee. If you’re not satisfied, get a full refund, no questions asked! All you have to do is message me.

Reach out to me

Use the links below to check out my other content, learn more about tutoring, reach out to me about projects, or just to say hi.

Small Snippets about Tech, AI and Machine Learning over here

AI Newsletter- https://artificialintelligencemadesimple.substack.com/

My grandma’s favorite Tech Newsletter- https://codinginterviewsmadesimple.substack.com/

Check out my other articles on Medium. : https://rb.gy/zn1aiu

My YouTube: https://rb.gy/88iwdd

Reach out to me on LinkedIn. Let’s connect: https://rb.gy/m5ok2y

My Instagram: https://rb.gy/gmvuy9

My Twitter: https://twitter.com/Machine01776819

![Activation Functions in Neural Networks [12 Types & Use Cases] Activation Functions in Neural Networks [12 Types & Use Cases]](https://substackcdn.com/image/fetch/$s_!DOBM!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fbbdd8c68-6797-4503-b345-d818a3a7d45c_886x1160.jpeg)